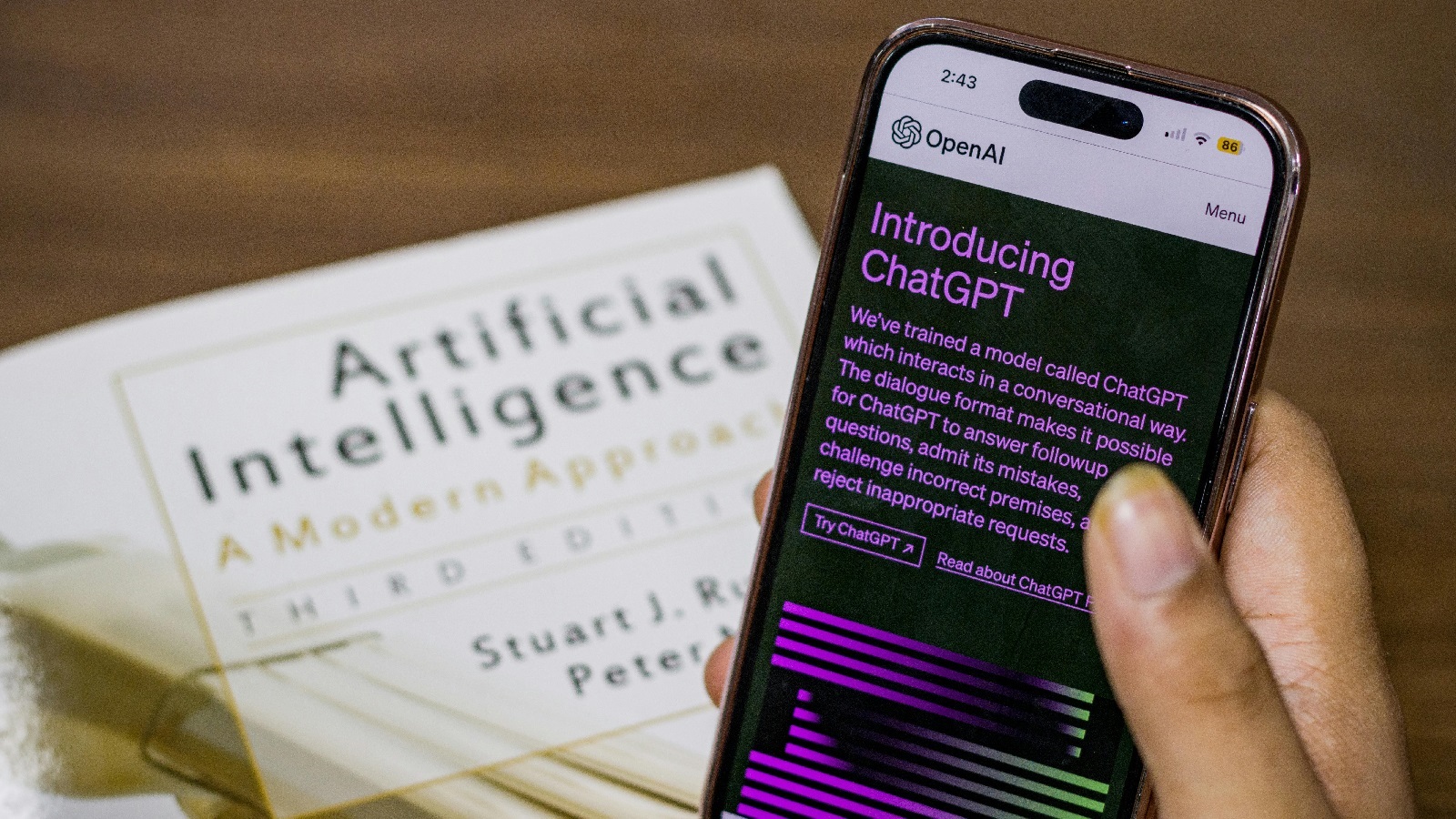

Artificial intelligence (AI) continues to dominate industry discussions, and usage is growing.

However, many organisations still lack the policies and technical controls needed to mitigate risks such as confidential data leakage. Advertising doesn’t help: it depicts highly stressed executives meeting last-minute deadlines and negotiating successfully thanks to AI’s capabilities.

AI can significantly improve efficiency. For example, I frequently use Copilot to generate PowerShell scripts that automate repetitive tasks, cutting work that could take hours in a GUI down to minutes. But it can also put a spanner in the works in the form of unregulated, unchecked information.

Here are some ways that organisations can use AI safely and to best effect:

- Establish strong governance with clear policies, leadership oversight and effective communication of what AI can be used for and what it should never be used for

- Protect sensitive data throughout model training and deployment

- Secure the AI supply chain by vetting external models and datasets and being explicit that only approved models can be used

- Defend models against threats such as prompt injection and data poisoning

- Continuously monitor and audit model behaviour and security

- Prevent shadow AI by offering safe, approved alternatives and enforcing policy

- Maintain compliance with evolving global AI regulations
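
To make the "approved tools only" idea concrete, here is a minimal Python sketch of an allowlist check that could sit behind a proxy rule or a log review script. It is an illustration under stated assumptions, not a finished control: the hostnames in `APPROVED_AI_HOSTS` and the function names `is_approved_ai_tool` and `find_unapproved` are hypothetical, and a real deployment would usually enforce this in the firewall, proxy or endpoint management tooling.

```python
from urllib.parse import urlparse

# Hypothetical allowlist: these hostnames are illustrative placeholders,
# not a recommendation or a complete list.
APPROVED_AI_HOSTS = {"copilot.microsoft.com", "ai.internal.example.com"}


def is_approved_ai_tool(url: str) -> bool:
    """Return True only if the URL's hostname is exactly on the allowlist."""
    # urlparse only fills in hostname when the URL includes a scheme,
    # so anything without one is treated as unapproved.
    host = (urlparse(url).hostname or "").lower()
    return host in APPROVED_AI_HOSTS


def find_unapproved(urls: list[str]) -> list[str]:
    """Return the URLs that point at hosts outside the allowlist."""
    return [u for u in urls if not is_approved_ai_tool(u)]
```

Matching on the exact hostname, rather than checking whether the URL merely contains an approved name, means that a lookalike such as `copilot.microsoft.com.evil.example` is rejected.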

A key challenge for SMEs is implementing these controls cost-effectively, as they often lack the dedicated legal teams or large IT departments needed to oversee monitoring and compliance.

This raises the question: should SMEs rely primarily on Copilot, given its trusted, enterprise-grade protections, and restrict access to other AI tools, despite the difficulty of doing so at scale?

Ultimately, user education remains the most impactful starting point for strengthening AI security. As the saying goes, many issues stem from a “problem in chair, not in computer” (PICNIC).

Alex Moss | Senior Technical Consultant